Beyond the Goldfish Bowl: Memory-Augmented LLMs and the Dawn of True Conversational Recall

Large Language Models (LLMs) have undeniably revolutionised how we interact with information and generate content. From drafting emails to coding complex algorithms, their capabilities are astounding. Yet even the most powerful LLMs suffer from a fundamental limitation: a finite context window. This "attentional horizon" means they can only "remember" a fixed amount of recent information (measured in tokens) when generating a response. Anything beyond that limit fades into oblivion, much like the proverbial goldfish's memory.

This constraint hinders their ability to engage in truly long-form conversations, process extensive documents, or maintain complex project-specific knowledge over time. But what if we could give these digital brains a long-term memory, an external hippocampus of sorts?

Enter Memory-Augmented LLMs (MaLLMs), a groundbreaking architectural shift promising to shatter these token limits and usher in an era of LLMs with vast, persistent recall.

The Tyranny of the Token Limit

Before diving into the solution, let's appreciate the problem. Transformer-based LLMs process the entire input prompt (including past conversation turns or document sections) at once, and in standard self-attention every token attends to every other token in that sequence. Powerful as it is, this mechanism scales quadratically with sequence length: doubling the context window does not just double the cost of attention, it roughly quadruples it (along with the size of the attention score matrix), making extremely long context windows prohibitively expensive and slow.
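To make the quadratic growth concrete, here is a deliberately naive single-head self-attention written in plain Python. It is a sketch, not how production models implement attention: it omits learned query/key/value projections, masking and multiple heads, and uses toy vectors. It simply counts how many query-key scores are computed, which is exactly the sequence length squared.

```python
import math


def self_attention(x):
    """Naive single-head self-attention with Q = K = V = x (no learned projections).

    Returns the output vectors and how many query-key scores were computed.
    """
    d = len(x[0])
    scores_computed = 0
    outputs = []
    for q in x:  # every token acts as a query...
        scores = []
        for k in x:  # ...and is scored against every token as a key
            scores.append(sum(a * b for a, b in zip(q, k)) / math.sqrt(d))
            scores_computed += 1
        m = max(scores)  # subtract the max for a numerically stable softmax
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        # Output = attention-weighted sum of the value vectors (here V = x).
        outputs.append([sum(w * v[j] for w, v in zip(weights, x)) for j in range(d)])
    return outputs, scores_computed


def toy_sequence(n, d=4):
    """Deterministic toy token vectors; their values do not matter for the count."""
    return [[math.sin(i + j) for j in range(d)] for i in range(n)]


for n in (64, 128, 256):
    _, count = self_attention(toy_sequence(n))
    print(n, count)
# 64 4096
# 128 16384
# 256 65536
```

Each doubling of the sequence length multiplies the number of scores (and the size of the score matrix) by four, which is the scaling problem described above.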

This limitation manifests in several ways:

Lost Context in Long Dialogues: The LLM forgets earlier parts of a lengthy conversation.

Inability to Process Large Documents: Analysing entire books, research papers, or legal depositions in one go is often impossible.

Limited In-Context Learning: The number of examples or "demonstrations" you can provide to guide the LLM's behaviour (few-shot prompting) is restricted by the token limit.

Decoupling for Depth: The Core Idea of MaLLMs

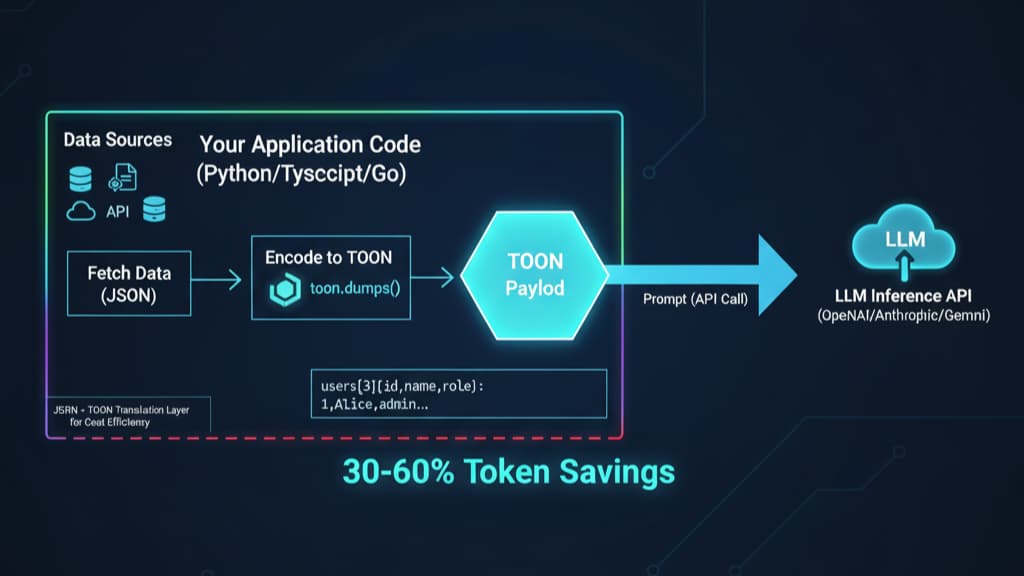

The core innovation behind Memory-Augmented LLMs is the decoupling of the LLM's core reasoning engine from a dedicated long-term memory module. Instead of trying to cram everything into the LLM's native, limited context, MaLLMs offload historical information to an external, efficiently accessible memory store.

This architecture typically involves:

A Core LLM: Often a powerful, pre-trained foundation model (like GPT, Llama, etc.). Crucially, this core LLM can remain "frozen," meaning its internal weights are not altered.

A Memory Encoder: Responsible for processing incoming information and converting it into a format suitable for storage in the long-term memory.

An External Memory Store: This could be a vector database, a key-value store, or another structured/unstructured data repository. It's designed to hold vast amounts of information.

A Retriever Mechanism: This is the intelligent component that, given a current query or context, searches the external memory and fetches the most relevant historical information.

An Aggregator/Context Constructor: This component takes the retrieved memories and the current short-term context, and combines them into a prompt that the core LLM can process effectively.
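The five components above can be wired together in a few dozen lines. The sketch below is purely illustrative and makes several assumptions: the encoder is a toy bag-of-words stand-in rather than an LLM, the store is an in-memory list rather than a vector database, and `frozen_llm` is a placeholder for a real model call. All names (`encode`, `MemoryStore`, `build_prompt`, `frozen_llm`) are hypothetical.

```python
import math
import re
from collections import Counter


def encode(text):
    """Memory encoder (toy stand-in): sparse bag-of-words vector, L2-normalised.

    A real system would use the LLM or a dedicated embedding model here.
    """
    counts = Counter(re.findall(r"[a-z0-9]+", text.lower()))
    norm = math.sqrt(sum(c * c for c in counts.values())) or 1.0
    return {word: c / norm for word, c in counts.items()}


def cosine(a, b):
    """Cosine similarity of two normalised sparse vectors."""
    return sum(v * b.get(word, 0.0) for word, v in a.items())


class MemoryStore:
    """External memory store: a plain list of (vector, text) pairs."""

    def __init__(self):
        self.items = []

    def add(self, text):
        self.items.append((encode(text), text))

    def search(self, query, k=2):
        """Retriever: return the k stored texts most similar to the query."""
        q = encode(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[0]), reverse=True)
        return [text for _, text in ranked[:k]]


def build_prompt(memories, recent_turns, query):
    """Aggregator / context constructor: merge long-term and short-term context."""
    lines = ["Relevant memories:"]
    lines += [f"- {m}" for m in memories]
    lines.append("Recent conversation:")
    lines += recent_turns
    lines.append(f"User: {query}")
    return "\n".join(lines)


def frozen_llm(prompt):
    """Placeholder for the frozen core LLM; its weights are never touched."""
    return f"<response generated from a {len(prompt)}-character prompt>"


store = MemoryStore()
store.add("The user's favourite programming language is Rust.")
store.add("The project deadline is the end of March.")
store.add("The user prefers concise answers.")

question = "Which language does the user like programming in?"
memories = store.search(question, k=1)
prompt = build_prompt(memories, ["User: Hi again!", "Assistant: Welcome back."], question)
print(prompt)
print(frozen_llm(prompt))
```

Because the core model is only ever called, never updated, any of the other components can be swapped (say, for a real embedding model and vector database) without touching its weights.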

Spotlight on LongMem: A Practical Implementation

A prime example of this architecture is the LongMem framework, introduced in the paper "Augmenting Language Models with Long-Term Memory" (Wang et al., 2023, arXiv:2306.07174). LongMem cleverly uses:

A Frozen LLM as a Memory Encoder: The pre-trained backbone LLM itself encodes previous inputs, and the attention key-value pairs it computes at one of its layers are cached in a long-term memory bank. This leverages the LLM's inherent understanding of language to create rich, semantic representations of past context.

An Adaptive Side-Network as a Retriever and Reader: This is a smaller, specialised residual network (the "SideNet") trained to retrieve relevant memories and fuse them with the current context. When new input arrives, the cached memory (held in a FAISS-like vector index for efficiency) is searched and the top-k most relevant chunks of past context are fetched.

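Cached Memory Fusion: Rather than prepending retrieved text to the prompt, LongMem feeds the retrieved key-value pairs into a memory-augmented layer of the side-network, where the current tokens attend jointly over their local context and the retrieved memories. The frozen LLM processes only the current input, and the side-network combines its hidden states with the recalled information.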
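The following is a simplified, single-head sketch loosely modelled on LongMem's description, not the paper's code: key-value pairs from past inputs are cached in fixed-size chunks, the top-k chunks are retrieved by comparing the current query with each chunk's mean key, and the query then attends jointly over its local context and the retrieved pairs with a single softmax. The class and function names, the chunk size and the oldest-first eviction policy are assumptions made for illustration.

```python
import math
from collections import deque


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


class KVMemoryBank:
    """Cache of past (key, value) pairs, stored in fixed-size chunks.

    Assumption for this sketch: when full, the oldest chunk is evicted first
    (a deque with maxlen).
    """

    def __init__(self, chunk_size=2, max_chunks=1024):
        self.chunk_size = chunk_size
        self.chunks = deque(maxlen=max_chunks)

    def write(self, keys, values):
        """Cache the key/value pairs a frozen model computed for a past segment."""
        for i in range(0, len(keys), self.chunk_size):
            self.chunks.append((keys[i:i + self.chunk_size], values[i:i + self.chunk_size]))

    def retrieve(self, query, top_k=1):
        """Chunk-level retrieval: rank chunks by the dot product of query and mean key."""
        def mean_key(chunk_keys):
            return [sum(col) / len(chunk_keys) for col in zip(*chunk_keys)]

        ranked = sorted(self.chunks, key=lambda ch: dot(query, mean_key(ch[0])), reverse=True)
        keys, values = [], []
        for chunk_keys, chunk_values in ranked[:top_k]:
            keys += chunk_keys
            values += chunk_values
        return keys, values


def joint_attention(query, local_keys, local_values, mem_keys, mem_values):
    """One softmax over local and retrieved keys, so both compete for attention."""
    keys = local_keys + mem_keys
    values = local_values + mem_values
    weights = softmax([dot(query, k) / math.sqrt(len(query)) for k in keys])
    return [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]


bank = KVMemoryBank(chunk_size=2)
bank.write(keys=[[1.0, 0.0], [1.0, 0.2], [0.0, 1.0], [0.1, 1.0]],
           values=[[10.0, 0.0], [10.0, 0.0], [0.0, 10.0], [0.0, 10.0]])

query = [0.0, 1.0]
mem_keys, mem_values = bank.retrieve(query, top_k=1)
print(mem_values)  # [[0.0, 10.0], [0.0, 10.0]]
print(joint_attention(query, [[1.0, 0.0]], [[1.0, 1.0]], mem_keys, mem_values))
```

Because local and retrieved keys share one softmax, the retrieved memory only dominates the output when it matches the query better than the local context does.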

The beauty of LongMem lies in its efficiency and adaptability. By keeping the powerful base LLM frozen, it avoids the colossal costs associated with retraining such models. Instead, only the lightweight side-network needs to be trained, making the system far cheaper to adapt. The paper reports that LongMem can enlarge its long-term memory to 65k tokens, far more than the native context window of its backbone model.

The Training Pipeline: Teaching the Retriever to Remember

The training process for a MaLLM focuses on honing the retriever's ability to identify and fetch genuinely useful information. In general terms, this involves:

Data Preparation: Creating training instances that consist of a query, a desired response, and a large corpus of potential memories (e.g., previous turns of a conversation, sections of a document).

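Retriever Training: The adaptive side-network (retriever) is trained to predict which memory chunks are most relevant to the current query for generating the target response. This can be framed as a learning-to-rank problem or driven by reinforcement learning signals based on the quality of the LLM's output when it is given certain retrieved memories. LongMem itself takes a simpler route: its side-network is trained with an ordinary language-modelling objective on long texts, so it learns to use whichever memories help predict the next token.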

No Base Model Retraining: A key advantage, as emphasised above, is that the base LLM's parameters remain untouched. This not only saves immense computational resources but also preserves the general capabilities of the foundation model. The system learns to use the LLM better, rather than changing the LLM itself.
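The learning-to-rank framing mentioned above can be illustrated with a toy example. In this hypothetical sketch the "frozen encoder" is a fixed keyword-count function with no trainable parameters, and the retriever is a diagonal bilinear scorer whose weight vector is the only thing updated, via a pairwise hinge loss on (query, relevant memory, irrelevant memory) triples. The feature vocabulary, triples and hyperparameters are invented for illustration; this is not LongMem's training procedure.

```python
import re

FEATURES = ("refund", "password", "shipping", "order")  # hypothetical vocabulary


def frozen_encode(text):
    """Stand-in for the frozen encoder: a fixed function with no trainable parameters."""
    words = re.findall(r"[a-z]+", text.lower())
    return [float(words.count(f)) for f in FEATURES]


def score(w, q, m):
    """Retriever score q^T diag(w) m; only w is trainable."""
    return sum(wi * qi * mi for wi, qi, mi in zip(w, q, m))


def train_retriever(triples, epochs=20, lr=0.1, margin=1.0):
    """Pairwise hinge-loss learning-to-rank over (query, relevant, irrelevant) triples."""
    w = [0.0] * len(FEATURES)
    encoded = [tuple(frozen_encode(t) for t in triple) for triple in triples]
    for _ in range(epochs):
        for q, pos, neg in encoded:
            loss = margin - score(w, q, pos) + score(w, q, neg)
            if loss > 0:
                # d(loss)/d(w_i) = -q_i * (pos_i - neg_i), so step against it.
                w = [wi + lr * qi * (pi - ni) for wi, qi, pi, ni in zip(w, q, pos, neg)]
    return w


triples = [
    ("How do I get a refund for my order?",
     "Refund issued after the order was returned.",
     "Order shipping delayed by the courier."),
    ("I forgot my password.",
     "Password reset link sent to the user.",
     "Refund issued after the order was returned."),
]
weights = train_retriever(triples)
print(weights)
```

Before training, every weight is zero and the retriever cannot tell relevant from irrelevant memories; afterwards the relevant memory scores higher for both queries, while `frozen_encode` is exactly as it was.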

In-Context Learning at Scale: The Power of Extended Demonstrations

One of the most exciting implications of MaLLMs is their ability to supercharge in-context learning (ICL). ICL is the remarkable ability of LLMs to learn new tasks or adapt their behaviour based on a few examples (demonstrations) provided directly in the input prompt.

With traditional LLMs, the number of such demonstrations is severely limited by the token window. If your examples are lengthy or you need many of them for a complex task, you're out of luck.
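A quick back-of-the-envelope calculation shows how fast the budget runs out. The numbers below are hypothetical: a 4,096-token window with 500 tokens reserved for the instructions, the new query and the model's answer, and demonstrations of a fixed length.

```python
def max_demonstrations(context_window, reserved_tokens, tokens_per_demo):
    """How many equal-length demonstrations fit beside the instructions, query and answer."""
    available = context_window - reserved_tokens
    return max(0, available // tokens_per_demo)


# Hypothetical numbers: 4,096-token window, 500 tokens reserved.
print(max_demonstrations(4096, 500, 300))   # 11
print(max_demonstrations(4096, 500, 1200))  # 2
```

With 3,596 tokens left over, short 300-token examples allow only eleven demonstrations, and longer 1,200-token examples leave room for just two.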

MaLLMs obliterate this barrier. They allow for:

Caching Vast Demonstration Libraries: You can store an extensive library of high-quality demonstrations, task instructions, or stylistic examples in the external memory.

Dynamic Retrieval of Relevant Examples: When a new query arrives, the retriever can fetch the most pertinent demonstrations from this vast cache.

Enhanced Task Adaptation: The core LLM then receives the new query along with a rich set of highly relevant examples, enabling it to perform the task more accurately and in the desired style, all without any explicit fine-tuning.
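The retrieve-then-prompt loop described above can be sketched as follows. This is a hypothetical, minimal version: similarity is plain word overlap (Jaccard) rather than learned embeddings, token counts are approximated by whitespace-separated words, and demonstrations are chosen greedily by similarity, skipping any that would overflow the budget. All function names are illustrative.

```python
import re


def words(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a, b):
    """Word-overlap similarity between two texts (0.0 to 1.0)."""
    wa, wb = words(a), words(b)
    union = wa | wb
    return len(wa & wb) / len(union) if union else 0.0


def approx_tokens(text):
    # Rough assumption: one token per whitespace-separated word.
    return len(text.split())


def select_demonstrations(library, query, budget):
    """Greedily pick the most similar (input, output) demos that fit the token budget."""
    ranked = sorted(library, key=lambda demo: jaccard(demo[0], query), reverse=True)
    chosen, used = [], 0
    for demo_in, demo_out in ranked:
        cost = approx_tokens(demo_in) + approx_tokens(demo_out)
        if used + cost <= budget:  # skip any demo that would overflow the budget
            chosen.append((demo_in, demo_out))
            used += cost
    return chosen


def build_few_shot_prompt(demos, query):
    blocks = [f"Input: {i}\nOutput: {o}" for i, o in demos]
    blocks.append(f"Input: {query}\nOutput:")
    return "\n\n".join(blocks)


library = [
    ("My parcel has not arrived yet", "Sorry for the delay; here is your tracking link."),
    ("I was charged twice for one order", "We have refunded the duplicate charge."),
    ("How do I change my password", "Use the 'Forgot password' link on the sign-in page."),
]
query = "I was charged twice this month"
demos = select_demonstrations(library, query, budget=30)
print(build_few_shot_prompt(demos, query))
```

Here the most similar past ticket is chosen first and the password example still fits within the 30-word budget, so it is included too; the parcel example would overflow the budget and is skipped.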

Imagine an LLM assisting with customer support. Its external memory could store thousands of past successful issue resolutions. When a new support ticket comes in, the MaLLM retrieves similar past cases and their solutions, providing the LLM with powerful context to generate a helpful and accurate response.

Beyond Token Limits: The Future is Remembered

Memory-Augmented LLMs represent a significant step towards creating AI systems that can learn, reason, and converse with a deeper understanding of history and context. By decoupling memory from computation, frameworks like LongMem offer a scalable and efficient path to:

Processing and understanding entire books, research papers, or codebases.

Maintaining coherent, long-term conversations that span days or weeks.

Building highly personalised AI assistants that remember user preferences and interaction history.

Enabling more sophisticated few-shot and zero-shot learning by providing richer contextual cues.

While challenges remain in optimising retrieval speed, ensuring the relevance of retrieved memories, and managing the ever-growing memory stores, the trajectory is clear. We are moving away from LLMs with fleeting attention spans towards intelligent systems possessing a robust and accessible long-term memory – a crucial component for any truly intelligent entity, biological or artificial. The future of LLMs is not just about bigger models, but smarter memory.